Business ethics remain the most undervalued facet of organizational success. Yet they extend beyond the confines of the organization and into UX design, where the manipulation of ethical principles can severely undermine the user experience.

Over three-quarters of America’s largest corporations are integrating ethical decision-making frameworks into their organizations, implying a desire to be “better.”

The digital sphere is no different, which is why the value sensitivity of designs has led to major debates. The discussion centers on the development of technology and its ethicality.

The concern isn’t that online design practices are illegal. Most of the time, they aren’t. However, knowledge is a powerful tool that can be used either for good or for selfish purposes.

In the field of digital marketing, knowledge is frequently used to misinform, mislead, and even manipulate users into making decisions they would not have made otherwise. This is where a seemingly harmless practice crosses the line into unethical behavior, which, in turn, leads to a slew of harmful practices directed at Web users.

What Are Ethical Issues in Design?

Design is the undisputed backbone of any successful website, product, or application. It is the key driver of user behavior, with consumer trust and brand reliability being especially important factors in marketing decisions.

Sadly, these decisions aren’t always in the user’s interests. Such practices, known as dark patterns, are UI/UX ploys that exploit design psychology. They are designed to mislead or trick users into taking actions they did not intend. In 2010, design consultant Harry Brignull coined the term whilst gauging ethical frameworks.

Several practices fall into the ‘dark patterns’ category, notably:

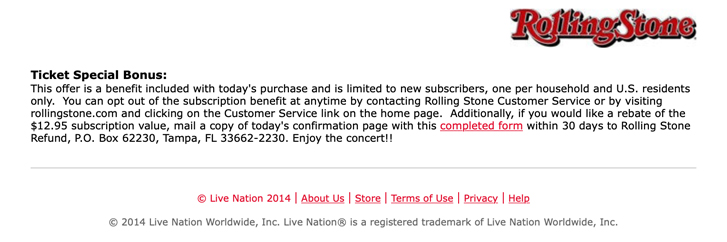

The Roach Motel

Ever wanted to download a particular software program, only to have its partner software auto-installed because you didn’t notice a small, pre-ticked checkbox in the corner of the screen?

If so, you’re already familiar with what a roach motel looks like.

In a roach motel, designers make opting in easy and opting out difficult. This less-than-ethical business practice is employed to generate more revenue while also garnering more subscriptions and clicks.

Manipulating User Behavior

In a world where user data is abundant, companies engage in user behavior analytics to improve their understanding of the user experience.

There is a grey area surrounding practices like user behavior tracking and the tools that supplement it. Such practices aim to understand how a consumer’s mind works so that the consumer may be more easily swayed by tactics that push them to act a certain way.

This by no means indicates that all businesses engage in psychological manipulation. Yet certain practices have the potential to make users feel misled, which can harm an organization’s ethical integrity.

Tools like Hotjar, Clicky, and Crazy Egg are harmless on their own. Yet they can be used in harmful ways. Their deliberate misuse can condition customers or provoke stimulus-induced behaviors, which may easily lead to customer anxiety or even anger.

A prominent example of this is the growing phenomenon of fake news, where fabricated news misguides viewers and may push them into behaving in ways that accord with how the news is reported.

Users establish trust with a website based on three key questions:

First and foremost, were their actions what they intended? If not, did they freely consent to them, or were they coerced into them?

Secondly, could the outcome be reasonably predicted? If not, what happened? Was it a genuine mistake, or was the situation designed to cause that mistake?

Thirdly, is there any step of the process that seems unethical at face value? The user will ask, “Was my behavior manipulated in any way?”

This places a significant amount of responsibility on UX professionals. The goal is to create user experiences that do not influence users’ actions in ways that are perceived as negative or harmful.

Surveillance and Privacy

Many people’s lives have become easier as surveillance technology has advanced, across all ages and without regard for geographical limitations. Surveillance equipment assists the elderly, and monitors track babies’ movements. Another example is software like Car Connection, which helps parents monitor their children’s driving. UX professionals have certainly made great contributions to ensuring that technology is more than just user-friendly.

It is important not to let these stories deceive you into believing that it’s all sunshine and roses. There remain real concerns about the use of surveillance for personal gain. Shoshana Zuboff’s book “The Age of Surveillance Capitalism” introduces the concept of “surveillance capitalism.” The retired Harvard professor advances the notion that your data is treated as a commodity for sale in a market, after which organizations engage in data manipulation on a grand scale.

Despite the best efforts to provide a pleasant user experience, UX professionals must accept some responsibility for how these products and services use data to power their processes. A prominent example of user data being used nefariously is Facebook’s data scandal, in which Cambridge Analytica accessed the personal data of approximately 87 million users. This was due to the absence of consumer data protection and a lack of developer monitoring. It raised legitimate concerns about the ethical frameworks that technology corporations should follow, as well as the need for greater government oversight and regulation.

Truthful Statistics

Visualizing data allows people to gain knowledge from it.

The methods for visualizing data range from standard scatterplots to intricate interactive systems, which convey the purpose and meaning of the data more easily.

The approach to this visualization can influence how people perceive the data. An apt depiction of data requires a carefully crafted combination of design elements.

Often, when data is collected, it confirms some parts of a specific study and rejects others. Due to factors like sample size, the results can land on a spectrum of accuracy. Results that represent only part of the sample don’t communicate an accurate picture of reality.

Data can be manipulated or misrepresented to depict a distorted reality. Unfortunately, some researchers take advantage of such opportunities to give the impression that their findings reflect a more significant reality than they do. In the name of ‘cleaning data,’ they may also trim data or outlier results, skewing the results in one direction.
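To make the effect of trimming concrete, here is a minimal sketch in Python. The numbers are invented for illustration and do not come from any real study, and the `trim_above` helper and its cutoff are hypothetical. It only shows how removing the slowest results in the name of "cleaning" pulls an average in one direction.

```python
from statistics import mean


def trim_above(values, cutoff):
    """Drop every value above `cutoff` (a one-sided 'cleaning' step)."""
    return [v for v in values if v <= cutoff]


# Hypothetical task-completion times in seconds; not real study data.
completion_times = [12, 14, 15, 13, 16, 14, 58, 61]

honest_mean = mean(completion_times)
trimmed_mean = mean(trim_above(completion_times, cutoff=30))

print(f"All participants: {honest_mean:.1f}s")
print(f"After trimming:   {trimmed_mean:.1f}s")
```

In this made-up sample, two of eight participants struggled badly. Dropping them as "outliers" lowers the reported average from roughly 25 seconds to 14, and the design looks far easier to use than it was for a quarter of the group.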

These are not strictly categorized as illegitimate techniques for influencing user behavior. However, there still needs to be a conversation about their application. Errors or deliberate oversights, whether caused by inherent biases or by the intention to manipulate user behavior, may jeopardize research objectivity.

Reliable Testing

Any digital product released to the market needs to be held to a very high standard, both to ensure more efficient innovation and to protect the consumer experience.

The process of testing products during and following development can be biased, which may lead to overambitious claims being made to the consumer. Consumer expectations may be jeopardized when testing with an unsuitable sample, for example, conducting alpha testing only with people who have greater technical expertise than the typical user. This, or testing with too small a number of consumers, can result in an unsuitable final product.

Furthermore, before the product is marketed, a trial should be carried out during development to ensure that it performs all of its intended functions. It could be harmful if a product fails to meet consumer expectations but is still released to the market. This could be due to a biased testing group or ineffective white-box and black-box testing.
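The point about small test groups can be illustrated with a rough calculation. The sketch below uses the standard normal-approximation formula for the margin of error of a proportion at about 95% confidence. The function name and the sample sizes are chosen purely for illustration, and it assumes testers are picked at random.

```python
import math


def margin_of_error(sample_size, proportion=0.5, z=1.96):
    """Approximate 95% margin of error for an observed proportion.

    Uses the normal approximation z * sqrt(p * (1 - p) / n).
    proportion=0.5 is the most conservative (widest) assumption.
    """
    return z * math.sqrt(proportion * (1 - proportion) / sample_size)


# Illustrative sample sizes only.
for n in (10, 100, 1000):
    points = margin_of_error(n) * 100
    print(f"n={n:>4}: +/- {points:.1f} percentage points")
```

With only ten testers, a claim such as "70% of users completed the task" could be off by roughly thirty percentage points either way. Note that this covers random sampling error only. A group skewed toward technical experts stays biased no matter how large it is.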

Distraction in Interfaces

The same features that make user interfaces easy to use also carry a risk of distraction. This can be off-putting at best and life-threatening at worst.

Using GPS on a phone, for example, makes driving to unknown locations convenient, right? Well, that same phone can be the cause of distracted driving, which sadly claims thousands of lives every year.

Similarly, user interfaces can be distracting, diverting users’ attention from the information they came to read. This directly contradicts the visibility principle, which holds that UI design should keep relevant information visible and accessible without distracting the user from the website’s purpose. The goal is to provide users with clear and transparent options without overwhelming them with unnecessary information.

The term “hidden cost” refers to the deliberate practice of deceiving customers by displaying low prices that do not include the product’s other costs.

This tactic is used to trick users into purchasing seemingly inexpensive items, trapping them in a situation where they must purchase other items, which boosts revenue. UX designers should be careful with product placement on pages so that users are not distracted. This is especially important for e-commerce interfaces.
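One way to picture the hidden-cost pattern described above is to compare the price a listing advertises with what the user actually pays. The following is a minimal Python sketch. The `Listing` class, its field names, and the prices are all hypothetical and are not drawn from any real storefront or library.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class Listing:
    """Hypothetical product listing, used only for illustration."""
    base_price: Decimal
    mandatory_fees: dict = field(default_factory=dict)

    def advertised_price(self) -> Decimal:
        """Price a transparent interface shows up front: base plus every unavoidable fee."""
        return self.base_price + sum(self.mandatory_fees.values(), Decimal("0"))

    def hidden_cost(self) -> Decimal:
        """Amount a user would only discover at checkout if just the base price were shown."""
        return self.advertised_price() - self.base_price


# Invented example values.
ticket = Listing(
    base_price=Decimal("19.99"),
    mandatory_fees={"service fee": Decimal("4.50"), "delivery": Decimal("3.00")},
)
print(ticket.advertised_price())
print(ticket.hidden_cost())
```

The design choice here is simply that the first price a user sees already includes every fee they cannot avoid, so the hidden cost at checkout is zero.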

Users may be enticed to buy quickly when a product is shown as “nearly out of stock.” The same can happen when a website gives live updates on users who have bought certain items. Situations like these, much like the chase for the golden Quidditch ball in Harry Potter, lead users to buy fad items: individuals feel compelled to purchase a product because everyone else is. UX designers should make sure that their interfaces are truthful and steer clear of any inadvertent attempts at deceit.

Distraction is usually treated as the fault of users. However, the role of UX professionals in minimizing it must be acknowledged. Transparency, honesty, and a user-first mindset are important principles for UX designers. They should return to these key principles whenever confronted with difficult choices.

Build Trust With Ethical Practice

Architects are responsible for designs that support the livability of people’s homes. Similarly, UX designers, as the people responsible for the information architecture of the internet, are responsible for the “livability” of people online.

What should this information architecture look like?

Dishonesty, manipulation, and invasions of privacy are unquestionably bad places to start.

Information architecture should foster user trust to maintain a moral system for UX design. This can be done by providing important and pertinent information about the service, creating interfaces that reduce distractions, and being open about policies.

After all, an ethical business is already a successful business, winning the support of both its users and the wider public.